When it comes to your infrastructure, whether at home or at work, you really don’t want to mess around with storage. It’s where your precious pictures, media and business files reside. Commonly, storage has resided on the computer’s internal disk. This has always been a risky proposition, with little in the way of protection should the internal disk fail.

External storage had a brief surge of popularity with plasticky USB drives for $99 sprouting everywhere. Some of them even offered two drives in a mirrored configuration in case one drive died. But as with most things you get what you pay for, and in the case of storage you’d be far better off saving up a few hundred more and buying a network attached storage (NAS) appliance. In particular, a NAS which supports two or more hard drives for redundancy.

You will most certainly want your NAS to offer redundancy in case a hard disk dies. If you’re a business user you’ll want redundant power supplies as well as redundant network links in case either of those fails. Having a non-redundant power supply fail on a Friday evening is no fun, I can tell you, so redundant supplies are indispensable if uptime is remotely important. Redundant network links are less critical unless you need 100% uptime, and then you’ll really want to factor in the cost of dual network switches to split each link. At that point, though, you should probably be getting your advice from NetApp or Dell/EMC, since the setup, compatibility and configuration of all those components can be pretty tricky and nothing really beats that snug, cozy feeling of a vendor validated solution.

That being the case, I think it worth chronicling my storage experiences as a technical user.

My usages are pretty diverse but encompass the following requirements:

- Host 5-10 virtual machines via NFS

- Host 2TB of RAW image files and JPEGs

- Host 3TB of ripped DVDs (bought and paid for of course…)

- Host 500GB of music (also bought and paid for)

- Host 500GB of roaming profiles and other miscellany

- Host Apple Time Machine backups

In addition I think any good storage appliance should also offer:

- Web browser apps to access files and media

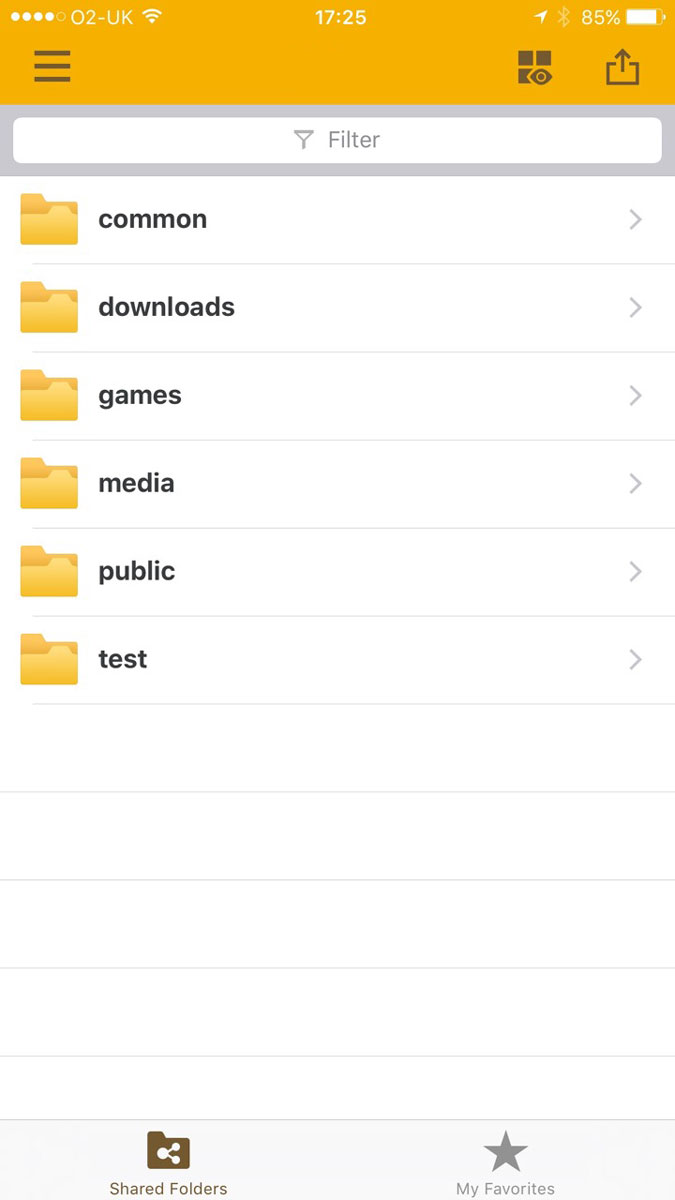

- Mobile apps to access files and media

- Support for user quotas

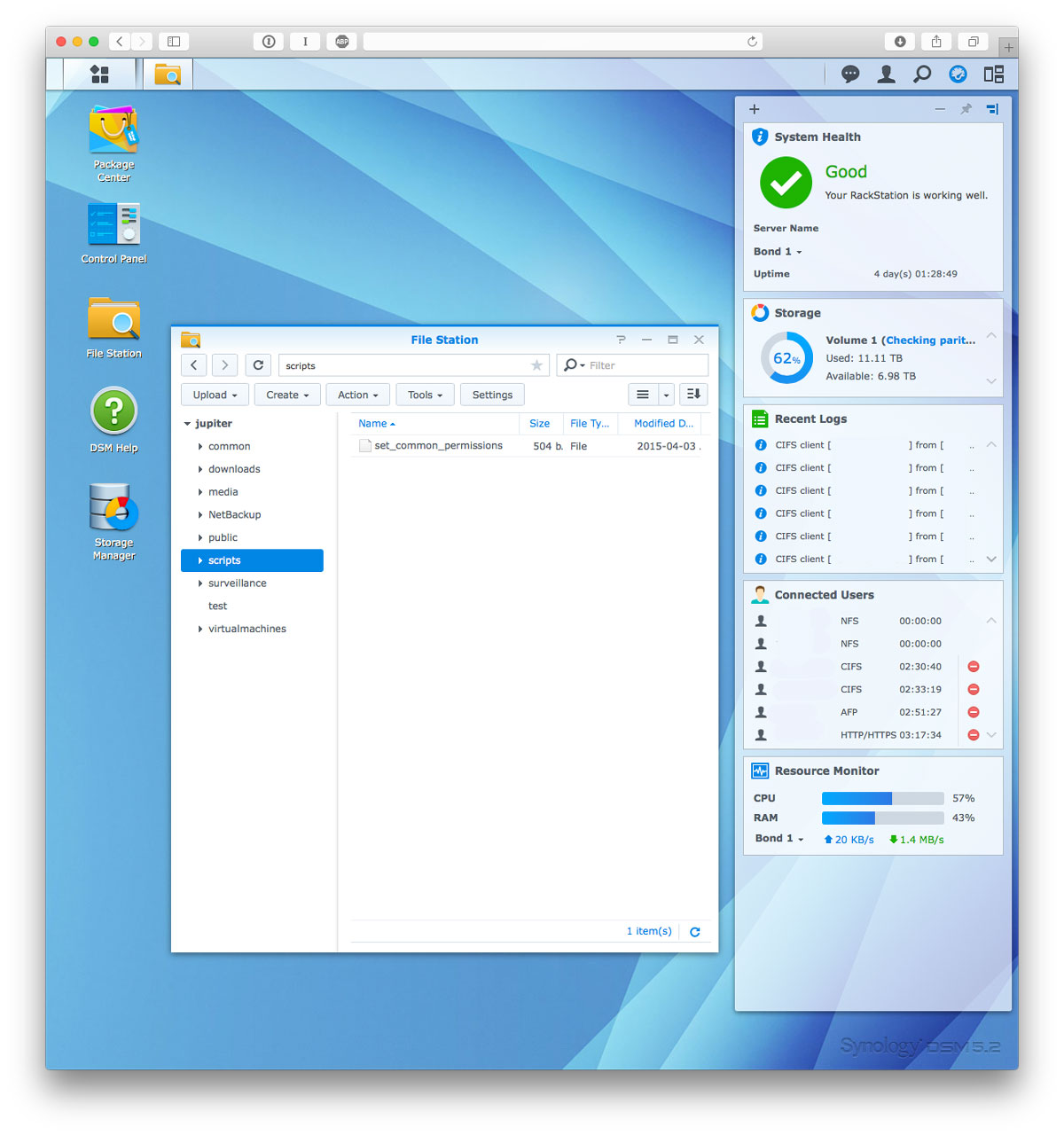

At the moment Synology’s range of Rackstation/Diskstation products stands head and shoulders above everyone else’s in my experience. They support all the items listed above and then some. In addition, their software is of uniformly good quality, be it the DSM operating system which runs on the NAS or their mobile apps. I can’t remember ever having to reboot my Synology for any sort of issue, whereas my Netgear ReadyNAS needed a weekly restart to prevent it from becoming flaky and sluggish.

Synology’s range of devices also consumes very little electricity. This is a big factor when considering a NAS since they’re designed to be on 24/7.

The 10 bay rackmount unit I have for instance consumes just 36W on idle and 112W on load. Their 8 bay desktop unit (DS1815+) consumes even less at 25W on idle and 46W on load.
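To put those wattages in perspective, here is a rough sketch of what they add up to over a year of running 24/7. It assumes the unit sits at a single constant power draw all year, which is a simplification, and it stops at kilowatt-hours since electricity prices vary; the function name is mine.

```python
HOURS_PER_YEAR = 24 * 365  # a NAS is designed to be on 24/7


def annual_kwh(watts: float) -> float:
    """Energy used in a year at a constant power draw, in kWh."""
    return watts * HOURS_PER_YEAR / 1000


# Figures quoted above: 10 bay rackmount vs 8 bay desktop (DS1815+)
for label, watts in [
    ("rackmount idle", 36),
    ("rackmount load", 112),
    ("DS1815+ idle", 25),
    ("DS1815+ load", 46),
]:
    print(f"{label}: {annual_kwh(watts):.0f} kWh per year")
```

Multiply the result by your own price per kWh to get a yearly running cost.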

In terms of noise the 8 bay units are whisper quiet at 24 dB(A) and their larger rackmount offerings whilst not silent only register at 42 dB(A) which is about the same as a quiet desk fan.

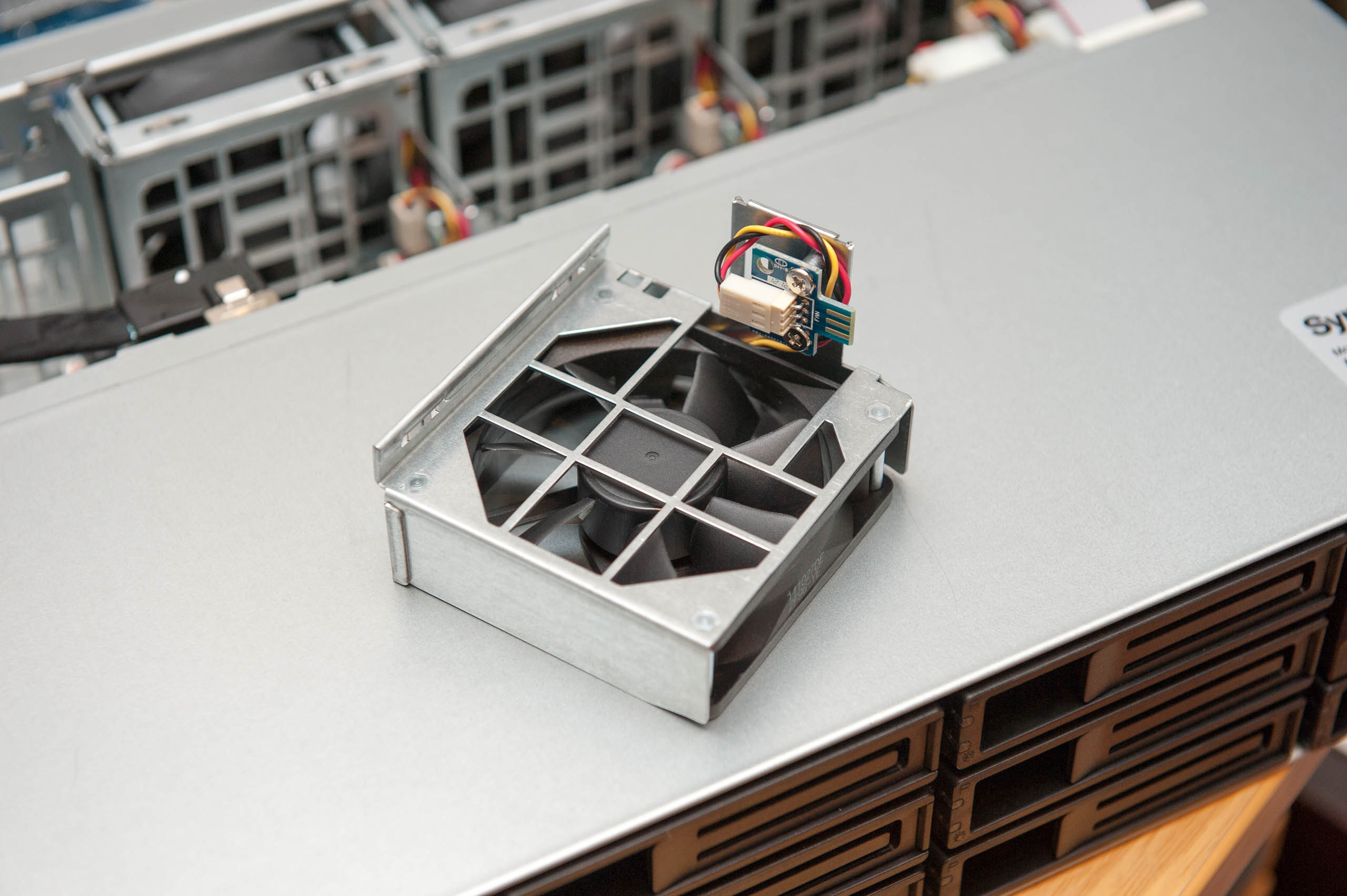

Having built storage servers myself, I know it’s very hard to get these kinds of power and noise efficiencies without spending a lot of money or time on special cooling fans, fiddly fan adapters or even fiddlier CPU frequency and fan configurations.

The Synology NAS I plumped for is a little old by their current standards so expect everything to be a little better looking in their latest models. I went for the RS2212+:

The web interface:

Mobile app interface:

If this sounds like an advert for Synology it’s because I really can’t recommend them highly enough. They tick every box. Except one: Bit Rot¹.

Bit Rot

Bit Rot is one of those things which slowly eats away at the integrity of your file data as it sits on your filesystem. Over time random bits of data in your files get flipped.

This could be caused by memory errors, firmware bugs in your storage controller or hard drive, or a dodgy cable. Files slowly get their bits flipped until enough of the file is ‘rotted’ away that the problem rears its ugly head as visible corruption. This could surface in a JPEG with a segment of the file scrambled. In an audio file it could manifest as odd digital noises. At worst you won’t be able to open the file at all. The big issue with bit rot is that since it’s silent and your filesystem isn’t aware of it, the data is backed up as is and your previously clean backup is now a copy of the rotten version of the file.
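The only way to catch a silent bit flip is to have recorded a checksum while the data was still good and to compare against it later. Below is a minimal sketch of that idea. SHA-256 from Python's standard library is just a stand-in for whatever checksum a real filesystem uses, and the helper names and sample data are made up for illustration.

```python
import hashlib


def checksum(data: bytes) -> str:
    """Fingerprint of the data, recorded while it is known to be good."""
    return hashlib.sha256(data).hexdigest()


def flip_bit(data: bytes, bit_index: int) -> bytes:
    """Simulate bit rot by inverting a single bit."""
    corrupted = bytearray(data)
    corrupted[bit_index // 8] ^= 1 << (bit_index % 8)
    return bytes(corrupted)


original = b"pretend these are the bytes of a holiday photo" * 100
stored_checksum = checksum(original)

rotted = flip_bit(original, 1234)

# Same length, and only one bit differs, so nothing looks wrong from outside
print(len(rotted) == len(original))
# The recorded checksum no longer matches, so the damage is detectable
print(checksum(rotted) == stored_checksum)
```

Without the stored checksum there is nothing to compare against, which is why the rotten copy sails straight into your backups.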

Most filesystems don’t take care of this problem. Even modern filesystems like ext4 and NTFS are affected.

There is however one major filesystem which was designed from the get-go with data integrity as one of its key design goals. That filesystem is ZFS.

ZFS

ZFS is perhaps the holy grail of filesystems. It not only resolves issues of data integrity but offers excellent storage pooling and superlative performance².

Rather uniquely ZFS supports highly configurable write and read caches which can be configured to run on ultra fast SSDs or even battery backed memory storage cards. In addition it supports interesting redundancy options like RAID-Z1, RAID-Z2 and RAID-Z3, which tolerate the failure of 1, 2 or 3 hard drives respectively.
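The trade-off with each RAID-Z level is capacity: roughly one disk's worth of space goes to parity for every drive failure you want to survive. A back-of-the-envelope sketch, assuming equal-sized disks and ignoring filesystem overhead and padding (so real usable space will come out somewhat lower); the function and table names are mine:

```python
# Number of drive failures each level survives, per the text above
RAIDZ_PARITY = {"raidz1": 1, "raidz2": 2, "raidz3": 3}


def usable_tb(disk_count: int, disk_size_tb: float, level: str) -> float:
    """Approximate usable capacity of a single RAID-Z group of equal disks."""
    parity = RAIDZ_PARITY[level]
    if disk_count <= parity:
        raise ValueError("need more disks than parity drives")
    return (disk_count - parity) * disk_size_tb


for level in RAIDZ_PARITY:
    print(level, usable_tb(5, 4, level), "TB usable from 5x 4TB")
```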

It also supports snapshots, which capture file differences over time (similar in function to Apple’s Time Machine), on the fly compression, and deduplication, which detects duplicate blocks of data and stores them only once to save space.
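ZFS deduplication works on blocks rather than whole files. The toy sketch below shows the general idea only and is not how ZFS implements it: data is cut into fixed-size blocks, each block is hashed, and a block whose hash has been seen before is not stored again. The 4096-byte block size and all the names here are arbitrary choices for illustration.

```python
import hashlib


def dedup_blocks(data: bytes, block_size: int = 4096):
    """Split data into blocks and keep only one copy of each distinct block."""
    store = {}    # digest -> block contents, stored once
    layout = []   # ordered digests needed to rebuild the original data
    for offset in range(0, len(data), block_size):
        block = data[offset:offset + block_size]
        digest = hashlib.sha256(block).hexdigest()
        store.setdefault(digest, block)
        layout.append(digest)
    return store, layout


# Three identical blocks followed by one different block
data = b"A" * 4096 * 3 + b"B" * 4096
store, layout = dedup_blocks(data)

print(len(layout), "logical blocks,", len(store), "actually stored")

# The original data can still be rebuilt from the layout
rebuilt = b"".join(store[d] for d in layout)
print(rebuilt == data)
```

The lookup table of hashes is what costs memory in practice, which feeds into the system requirements discussed next.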

On top of all that it’s open source.

So what’s the catch?

Firstly ZFS has pretty demanding system requirements. How demanding? Think 1GB of memory per TB of storage. For a decent sized 5x 4TB disk array (roughly 16TB usable once one disk’s worth of space goes to parity) you’re looking at 16GB of memory to provide good performance. However, just like disks and bit rot, you can experience bit errors in memory too. Thankfully with memory there’s an easy fix: opt for the more expensive ECC kind.

On top of that, if you want to serve up anything over NFS or any network protocol using synchronous writes, you’d better set aside $200-300 for a fast SLOG (Separate Intent Log) device. An Intel SSD will do nicely.

The final issue with widespread adoption is that ZFS is licensed under CDDL (Common Development and Distribution License). Most NAS operating systems use Linux which is licensed under GPL (GNU General Public License Version 2). Problems arise when CDDL and GPL code are combined into the same binary. In the case of the Linux kernel this prevents ZFS from being distributed as part of the kernel.

Whilst it can be shipped as a binary module, I suspect the main thing holding back wide scale adoption of ZFS on NAS boxes is the stringent system requirements and the cost of so much high end hardware. Most folk are hard pressed to pay $400 for a decent 4 bay NAS, let alone an extra $300 on top for lots of ECC RAM and an SSD write cache.

Recently Microsoft and the Linux community have attempted to address the problem of bit rot. In Microsoft’s case they now bundle their Resilient File System (ReFS) as part of Windows Server 2012. In the Linux community BTRFS (B-tree file system) is gaining ground and starting to ship in production systems.

BTRFS still has stringent system requirements but they’re a far cry from the requirements of ZFS. It is however still very new and only now hitting the market in the form of Synology’s business range of NAS boxes. It’ll be a while yet before it trickles down to the consumer range.

With that out of the way what storage setup did I opt for?

FreeNAS

After careful evaluation of all the various ZFS options out there I settled on FreeNAS.

FreeNAS not only offers by far the most polished ZFS distribution, it also has a great community which you really are going to need if you don’t want to mess this up (and despite that I did – at first).

In addition to the community FreeNAS also has a commercial offering called TrueNAS which by all accounts rocks it in the performance department since every component has been carefully selected to ensure optimal system setup.

Now that last line there might sound like marketing speak but in the case of ZFS you really need to pay attention to the FreeNAS system recommendations.

Firstly you’ll want to allocate 1GB of memory per 1TB of storage for optimum performance. So if you’re planning on 16TB of storage you’d better opt for 16GB of RAM. Depending on your usage 16GB of memory could last you a good long while. However if running things like virtual machines or high I/O loads you’ll want to opt for more like 32GB or even 64GB of memory.

In addition, if you’re going to be running virtual machines over NFS or any NFS heavy workload with synchronous writes then you’re going to want a SLOG device. Basically a really fast SSD with a supercapacitor in case of power loss.
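As a quick sanity check on that rule of thumb, here is a small sketch. The `workload_factor` knob is my own hypothetical way of expressing "double it (or more) for VMs and heavy I/O"; it is not a FreeNAS setting or an official formula.

```python
import math


def rule_of_thumb_ram_gb(storage_tb: float,
                         gb_per_tb: float = 1.0,
                         workload_factor: float = 1.0) -> int:
    """RAM suggested by the 1GB-per-TB rule, scaled for heavier workloads.

    workload_factor is a hypothetical multiplier: 1.0 for plain file
    serving, 2.0 or more for virtual machines or high I/O loads.
    """
    return math.ceil(storage_tb * gb_per_tb * workload_factor)


print(rule_of_thumb_ram_gb(16))                       # plain file serving
print(rule_of_thumb_ram_gb(16, workload_factor=2.0))  # VM hosting
```

In practice you would round the result up to whatever memory configuration your motherboard actually supports.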

Long and short of it is to buy Intel.

I kid ye not. I’ve been through Crucial, Samsung and…well, that’s it really, but I’ve been through several of every one of their SSDs and if rock solid reliability is what you’re after go Intel. Every time. Seriously. Not even kidding.

Besides, your SLOG device doesn’t need to be super large, it just needs to have a high write speed and IO per second count. Be warned though, smaller SSDs typically have fewer flash chips on them so tend to be slower than larger SSDs, which benefit from the increased parallel access that the extra chips afford.

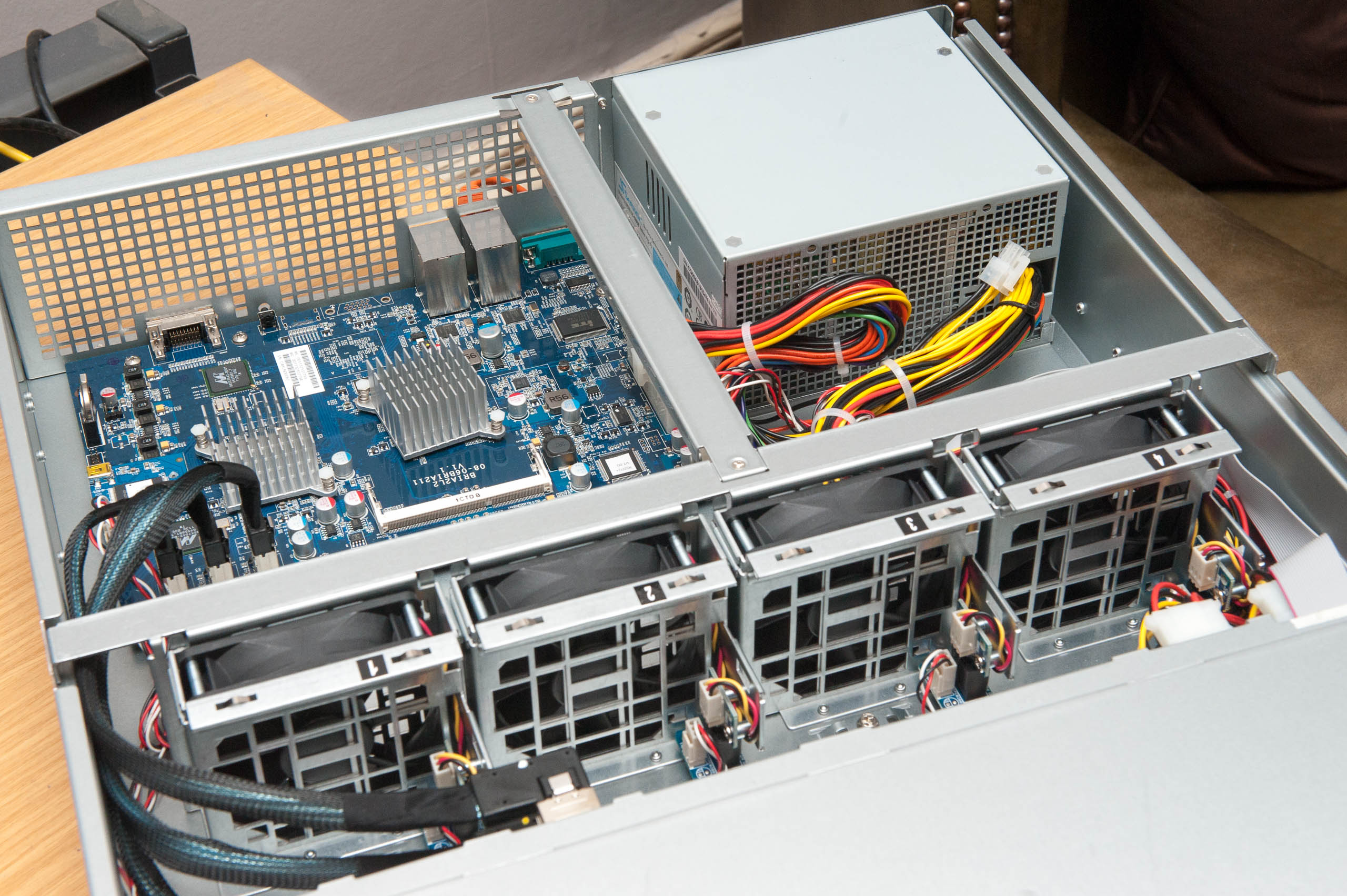

The next step is to pick a CPU. As luck would have it, whilst heavy on the wallet in memory and storage ZFS only needs a modest CPU to operate. Note: Make sure your CPU and mainboard can handle ECC memory. Generally Intel’s Core series can’t but their Xeon series can.

With that out the way pick a chassis. I’d recommend Supermicro. First pick the number of drive bays you need (hot swap bays are preferable in a storage server). Next if noise is a concern go for their super quiet range. Next choose your power supply option. Typically Supermicro’s SuperQuiet servers come with a super quiet power supply – but if loudness isn’t a concern simply choose a redundant supply which can handle the load. If in doubt regarding the PSU size drop them a line, they’re pretty good and can even give you stats on power consumption and noise output of each chassis.

Finally…post your system configuration on the FreeNAS newbies forum so that wiser eyes than yours can tell you if some obscure LAN chip on LAN port 2 is incompatible with FreeBSD 9 etc. etc. (now who said being a system integrator isn’t fun?!)

System building is fiddly. You’ll often find little issues which can put a damper on your build. Things like the motherboard you ordered not quite having enough clearance to accommodate your fancy heatpipe cooler. Or the auxiliary ATX power connector not quite reaching the motherboard header and needing an extension. Or the fans running too loud and needing fan adapters. The list goes on…

If ordering Supermicro I’d recommend having one of their resellers advise you. They’ll be able to assist in choosing a motherboard which fits the chassis you want. They’ll even build it all for you and ensure all the correct adapters, cables and little wiring bits are in place.

Building computers can be a real pain in the ass. Let someone else do it.

¹ until very recently – and only in their high end units which finally support BTRFS.

² when your server is correctly specced and configured.